Full Case Study: The Problem Space

Aircraft tire inspection is a four-part workflow in the Goal-Directed Task Analysis (GDTA): collect tools, verify pressure, visually examine, document. Task 1.3 - the visual examination - is where human error is most consequential and most likely. A technician must simultaneously perceive tread depth, cuts, cracks, bulges, flat spots, embedded FOD (foreign object debris), wear patterns suggesting misalignment, and any sign of internal casing damage. All of this while physically crouched near the landing gear, often in a loud, dirty hangar environment, cross-referencing a thick FAA manual on a tablet or printout.

The problem isn't intelligence. It's information architecture under pressure.

AI and XR offer a path forward - but only if the system is designed around how technicians actually think, not how engineers imagine they think.

Research Phase: Understanding the Human System

Before touching a single design tool, the team conducted 33 on-site interviews using a snowball sampling method at active maintenance facilities. Interviews were semi-structured, covering three thematic areas: current challenges in visual inspection, specific friction points when using new technology, and technician expectations and resistance toward AI/XR adoption.

The analysis was organized using a Systems Engineering Framework - a structured model that maps work system elements (tools/technologies, organization, tasks, environment, and the technician at the center) to processes (professional work, collaborative work, human-AI interaction) and outcomes (airworthy aircraft, organizational performance, employee wellbeing).

What technicians told us - in their own words:

On the limits of AI vision: "Mechanic would normally put his hand on there and see if anything feels loose. You can't usually visually see looseness. You have to feel for it." This single quote reshaped the entire sensing philosophy of the project. The XR interface couldn't just augment vision - it had to acknowledge what vision cannot do.

On manual information retrieval: "Like in the cargo bay manual, everything's there except the floor, which is under fuselage. You end up looking here, there, everywhere just to find one thing." This confirmed the case for embedded, contextual AI - not a smarter search bar.

On cognitive risk from XR: "You get focused on an area too tightly, and it becomes a myopic inspection." Poorly designed AR overlays would make this worse. The design response was Principle 55: enable easy decluttering, with a single gesture (hands open, palms down) that removes all display elements instantly.

On the opportunity: "What I just said instead of sitting in front of a keyboard, talking into something, gets shot right into the system. That'd be a positive." Voice-first AI interaction wasn't a nice-to-have. It was the core modality.

Cognitive Framework: GDTA for Tire Inspection

The Goal-Directed Task Analysis decomposed the overall inspection goal - ensure the safe and airworthy condition of the aircraft tire - into four sub-goals (SG 1.1 through SG 1.4), each with its own decision structure mapped across three levels of Endsley's Situation Awareness model:

- Level 1 (Perception): What data does the technician need to observe? (e.g., calibrated pressure gauge reading, ambient temperature, location of damage, type of defect present)

- Level 2 (Comprehension): What does that data mean? (e.g., does measured pressure fall within acceptable range? does the damage depth require removal from service?)

- Level 3 (Projection): What will happen if no action is taken? (e.g., anticipated risk of failure if damage is not addressed; potential safety impact during taxi, takeoff, and landing)

This three-layer SA model drove every design decision. The AI system's job is not to replace Level 2 and 3 reasoning - it is to ensure the technician has the perceptual inputs (Level 1) they need to form accurate comprehension and projection on their own, with the AI as a structured second opinion.
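As a rough illustration of how one sub-goal and its three SA levels could be held in a data structure, here is a minimal Python sketch. The class and field names are hypothetical, and assigning the example items above to SG 1.3 is an assumption; the source lists them without tying them to a specific sub-goal.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class SALevel(IntEnum):
    PERCEPTION = 1     # what the technician needs to observe
    COMPREHENSION = 2  # what that data means
    PROJECTION = 3     # what happens if no action is taken


@dataclass
class SubGoal:
    goal_id: str
    name: str
    requirements: dict = field(default_factory=dict)  # SALevel -> list of items

    def ai_supported(self) -> list:
        """Items the AI helps supply: Level 1 perceptual inputs only."""
        return self.requirements.get(SALevel.PERCEPTION, [])

    def technician_owned(self) -> list:
        """Level 2 and 3 reasoning stays with the technician."""
        return (self.requirements.get(SALevel.COMPREHENSION, [])
                + self.requirements.get(SALevel.PROJECTION, []))


# Illustrative content only; the item-to-sub-goal mapping is assumed.
sg_1_3 = SubGoal(
    goal_id="SG 1.3",
    name="Visually examine tire",
    requirements={
        SALevel.PERCEPTION: ["location of damage", "type of defect present"],
        SALevel.COMPREHENSION: ["does the damage depth require removal from service?"],
        SALevel.PROJECTION: ["anticipated risk of failure if damage is not addressed"],
    },
)

print(sg_1_3.ai_supported())
```

Keeping the AI-supported and technician-owned items in separate accessors mirrors the division of labor described above: the system feeds perception, and the technician forms comprehension and projection.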

Interface Design: The AI-XR Inspection System

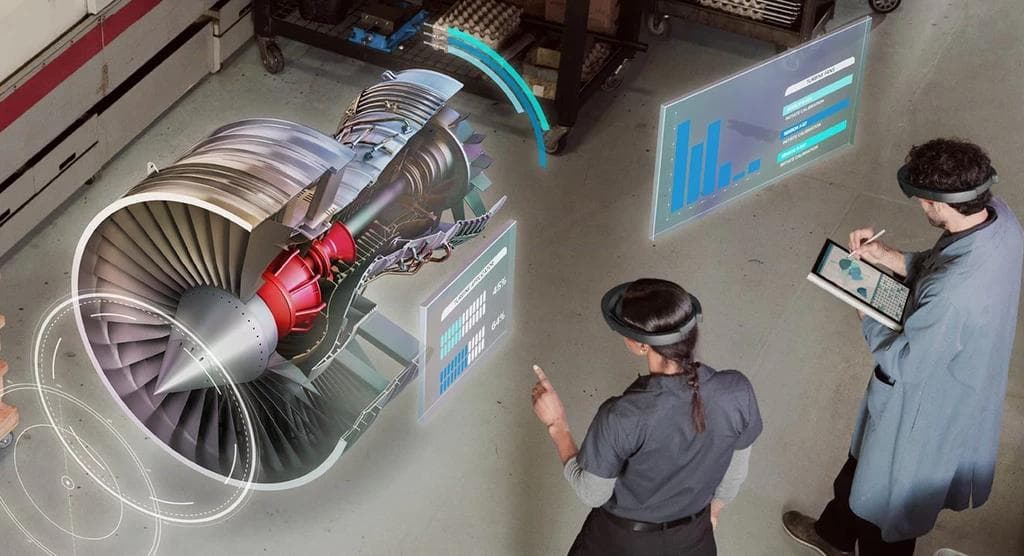

The designed system uses XR glasses (referenced against the Viture Pro XR form factor) to overlay contextual AR information directly onto the tire surface, paired with a voice-based conversational AI layer.

Four principles governed every interface decision:

Principle 52 - Proximity: AR imagery is anchored in close proximity to the relevant real-world feature. Tread depth annotations appear adjacent to the tread, not in a corner HUD. Degrees of freedom are controlled to fix the display in a convenient position relative to the technician's gaze. The goal is zero translation effort between information and the thing being inspected.

Principle 53 - Information Minimization: The display defaults to annotations and speech. Complex diagnostics are surfaced only on request. Geotagged imagery of previous inspection results can be called up via voice. The technician's visual field is not a dashboard.

Principle 54 - Visibility and Salience: When a defect zone is identified - by AI scan or technician tap - the background is dimmed and the defect area is highlighted in a semi-transparent red overlay with an "Action Required" label. The environment is visually isolated from the point of interest. Defects do not compete with hangar clutter for attention.

Principle 55 - Decluttering: A single gestural shortcut (hands open, palms down) removes all AR overlays instantly. This preserves the technician's raw situational awareness when they need to look at the tire without interference. This directly addresses the "myopic inspection" risk identified in the field research.
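The interaction between Principles 54 and 55 can be sketched as a small overlay state object: highlighting a defect dims the background, and the declutter gesture resets everything in one step. This is a hedged sketch, not the project's implementation; `OverlayState`, `handle_gesture`, and the gesture string are hypothetical names standing in for whatever the headset's hand tracker reports.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class OverlayState:
    annotations: list = field(default_factory=list)
    highlighted_defect: Optional[str] = None
    background_dimmed: bool = False

    def highlight(self, defect: str) -> None:
        """Principle 54: isolate the defect zone and dim the rest of the scene."""
        self.highlighted_defect = defect
        self.background_dimmed = True
        self.annotations.append(f"Action Required: {defect}")

    def declutter(self) -> None:
        """Principle 55: remove every AR overlay at once."""
        self.annotations.clear()
        self.highlighted_defect = None
        self.background_dimmed = False


def handle_gesture(state: OverlayState, gesture: str) -> None:
    # "open_palms_down" is an assumed label for the hands-open, palms-down gesture.
    if gesture == "open_palms_down":
        state.declutter()


state = OverlayState()
state.highlight("sidewall cut")           # hypothetical defect name
handle_gesture(state, "open_palms_down")  # technician wants an unobstructed view
print(state)
```

Routing the gesture to a single `declutter` method keeps the reset unconditional, so no overlay type can survive it by accident.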

Adaptive Experience: Novice vs. Expert Mode

One of the research's most consistent findings was the skill bifurcation in the workforce - younger technicians who are digital-native but inspection-inexperienced, and veteran technicians who know the work but resist unfamiliar technology. The interface was designed with an explicit novice/expert fork at session start.

Novice mode activates task guidance proactively. The AI walks the technician through each inspection type - tread depth first, then cuts, then surface damage - displaying reference images for acceptable vs. reject thresholds directly in the AR field. A swipeable virtual image carousel lets the technician compare their tire against known failure patterns. The AI asks follow-up questions and explains the reasoning behind decisions.

Expert mode trusts the technician's knowledge. The AI surfaces manual references and measurement AR overlays without narration. It offers to run the full AI scan and steps back unless called. The voice interaction is terse and confirmatory.

Both modes converge on the same AI-powered scanning workflow: the technician aligns the tire within a rectangular AR target on the display and rotates the tire slowly. The AI maps the surface, flags anomalies, and surfaces findings as annotatable flags the technician can review, edit, and route directly to the automated reporting system with a pinch gesture.
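One way to express the novice/expert fork is as a pair of configuration records chosen at session start. The sketch below is illustrative only: the field names are hypothetical, and turning the reference carousel off in expert mode is an assumption, since the carousel is described above only for novice mode.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModeConfig:
    proactive_guidance: bool  # AI walks through each inspection type unprompted
    explain_reasoning: bool   # AI explains the reasoning behind decisions
    reference_carousel: bool  # acceptable vs. reject reference images in the AR field


MODES = {
    "novice": ModeConfig(proactive_guidance=True, explain_reasoning=True,
                         reference_carousel=True),
    "expert": ModeConfig(proactive_guidance=False, explain_reasoning=False,
                         reference_carousel=False),
}

# Guidance order given for novice mode: tread depth, then cuts, then surface damage.
GUIDED_STEPS = ("tread depth", "cuts", "surface damage")


def next_prompt(mode: str, step_index: int) -> Optional[str]:
    """Return the spoken prompt for a step, or None when the AI should stay quiet."""
    config = MODES[mode]
    if not config.proactive_guidance or step_index >= len(GUIDED_STEPS):
        return None
    return f"Next, check {GUIDED_STEPS[step_index]}."


print(next_prompt("novice", 0))  # Next, check tread depth.
print(next_prompt("expert", 0))  # None
```

Because both modes share the same scanning workflow, only the guidance layer needs to branch on the mode; the scan itself can ignore it.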

Decision Support and Reporting

After flagging, the system asks: "Do you want to send this to the automated reporting system?" A pinch on the flag number confirms. The interface then loads an editable inspection report pre-populated with the flagged defect type, location, severity classification, and AI recommendation. The technician reviews, edits if needed, and signs off. Documentation shifts from a separate manual process to an inline step within the inspection itself.

Multiple defects can be flagged simultaneously - each assigned a sequential number (Flag 1, Flag 2) and tracked independently through the reporting flow.
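The flag-to-report flow can be sketched with two small records: a flag that carries the four pre-populated fields, and a session that numbers flags sequentially and drafts a report from the confirmed ones. All names and example values here are hypothetical; the sketch assumes flags are never deleted, so the next number is simply the current count plus one.

```python
from dataclasses import dataclass, field


@dataclass
class Flag:
    number: int
    defect_type: str
    location: str
    severity: str
    ai_recommendation: str
    confirmed: bool = False  # set when the technician pinches the flag number


@dataclass
class InspectionSession:
    flags: list = field(default_factory=list)

    def add_flag(self, defect_type, location, severity, ai_recommendation) -> Flag:
        # Sequential numbering: Flag 1, Flag 2, ...
        flag = Flag(len(self.flags) + 1, defect_type, location,
                    severity, ai_recommendation)
        self.flags.append(flag)
        return flag

    def confirm(self, number: int) -> None:
        """Mark one flag for the automated reporting system."""
        for flag in self.flags:
            if flag.number == number:
                flag.confirmed = True
                return
        raise KeyError(f"no flag numbered {number}")

    def draft_report(self) -> list:
        """Pre-populated, still-editable report rows for confirmed flags only."""
        return [
            {"flag": f.number, "defect_type": f.defect_type, "location": f.location,
             "severity": f.severity, "ai_recommendation": f.ai_recommendation}
            for f in self.flags if f.confirmed
        ]


session = InspectionSession()
session.add_flag("cut", "tread, outboard", "reject", "remove from service")
session.add_flag("flat spot", "tread, center", "monitor", "re-inspect next cycle")
session.confirm(2)
print(session.draft_report())
```

Each flag keeps its own `confirmed` state, which is what lets several defects move through the reporting flow independently.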

Study 2: When Does an AMT Trust AI?

Parallel to the XR interface design, the team investigated a foundational question that the design work raised but couldn't answer alone: how does AI reliability affect human trust and decision-making?

This was studied not in maintenance but in the adjacent high-stakes domain of Air Traffic Control (ATC), where the decision structure is the same: a human receives an AI recommendation, decides under time pressure, and bears a life-safety consequence.

The simulation assigned 40 participants to the role of an air traffic controller managing takeoff and landing clearances with an AI recommendation system operating at 60%, 80%, or 90% accuracy. Participants had 30 seconds per decision. Performance was measured across error rate, task completion time, hits, misses, false alarms, and correct rejections.

Key dependent variables: decision-making performance, cognitive workload (NASA-TLX), and trust in AI.
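The four outcome categories follow the standard signal-detection scheme, which can be tallied as in the sketch below. This is not the study's analysis code: what counts as the "signal" and the "response" in the ATC task is not stated here, so the two booleans are generic placeholders, and the trial data is invented purely to exercise the function.

```python
from collections import Counter

OUTCOMES = ("hit", "miss", "false_alarm", "correct_rejection")


def classify(signal_present: bool, responded: bool) -> str:
    """Standard signal-detection outcome for one decision."""
    if signal_present:
        return "hit" if responded else "miss"
    return "false_alarm" if responded else "correct_rejection"


def summarize(trials) -> dict:
    """Count outcomes and compute the error rate over (signal, response) pairs."""
    counts = Counter(classify(signal, response) for signal, response in trials)
    total = sum(counts.values())
    errors = counts["miss"] + counts["false_alarm"]
    summary = {name: counts[name] for name in OUTCOMES}
    summary["error_rate"] = errors / total if total else 0.0
    return summary


# Made-up trials: (signal_present, participant_responded)
trials = [(True, True), (True, False), (False, True), (False, False)]
print(summarize(trials))
```

Misses and false alarms are both errors, but they have different safety implications, which is why they are reported separately rather than folded into a single error rate.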

The questions the study addresses directly inform the AI-XR inspection design: at what reliability threshold does AI assistance become a net positive? What happens to decision quality when a technician over-trusts a system that is wrong 40% of the time? And critically - can XR interface design compensate for moderate AI reliability through better information presentation?

Key Findings

Across both research tracks, six insights will directly shape the next phase of interface development:

1. Shared cognitive work is the mechanism. Effective AI-XR integration doesn't mean AI does more. It means the cognitive load is correctly distributed - AI handles perception augmentation and data retrieval, the technician handles judgment and accountability.

2. Trust requires transparency. Technicians who couldn't trace where the AI's recommendation came from rejected it - regardless of accuracy. Explainability is not a nice-to-have; it is the precondition for adoption.

3. The training data gap is structural. Aviation maintenance data is sparse, proprietary, and rarely digitized. The system's AI needs preprocessing pipelines that can operate on limited labeled data and surface confidence scores alongside recommendations.

4. Hardware friction is underestimated. Visual acuity limitations, headset weight, and comfort in active hangar environments remain the top physical adoption barriers. The interface must degrade gracefully to voice-only on low-visibility days.

5. AI as decision support, not decision maker. Technicians were most receptive to AI as a structured second opinion - surfacing data they might miss, flagging patterns from service history, recommending action. Not as an autonomous verdict.

6. Organizational cost is the ceiling constraint. High deployment and training costs will limit rollout to facilities with regulatory incentive or safety incident history. The design roadmap must include a lightweight, device-agnostic mode that runs on existing tablet hardware.

What's Next

The next phase translates the GDTA and field research into a formal interface specification and prototype for XR headset deployment, followed by a structured usability evaluation with active aviation maintenance technicians (AMTs). The AI reliability study results will directly inform where in the interface AI confidence levels should be surfaced, and how the system should behave differently at 60% vs. 90% reliability - dynamically adapting the explainability and override affordances based on system certainty.
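A confidence-adaptive policy of this kind might look like the sketch below. Everything in it is an assumption made for illustration: the function name, the policy labels, and especially the 0.6 and 0.9 cut-offs, which are borrowed from the study's reliability conditions as placeholders, not results. The real thresholds and behaviors are exactly what the study is meant to determine.

```python
def presentation_policy(confidence: float, low: float = 0.6, high: float = 0.9) -> dict:
    """Map a per-finding AI confidence (0 to 1) to hypothetical UI behavior."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= high:
        # High certainty: keep the display minimal, explain only when asked.
        explanation, confirm = "on_request", "single_pinch"
    elif confidence >= low:
        # Moderate certainty: always show where the recommendation came from.
        explanation, confirm = "always_shown", "single_pinch"
    else:
        # Low certainty: show the explanation and ask for manual verification.
        explanation, confirm = "always_shown", "manual_verification"
    return {
        "confidence_label": f"{confidence:.0%}",
        "explanation": explanation,
        "confirm": confirm,
    }


for value in (0.95, 0.75, 0.40):
    print(presentation_policy(value))
```

The confidence label is returned in every branch, in line with the finding that technicians need to trace a recommendation's basis before they will accept it.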

The longer arc: move from a single-task inspection tool toward an adaptive, goal-driven agentic AI capable of perception, reasoning, planning, and genuine collaborative work with human technicians across the full maintenance workflow.

NSF Grant Nos. 2326185, 2326186, 2326187. Presented at HFES 69th International Annual Meeting. Clemson University · Purdue University · Southern Illinois University.